ARS

work packages

OBJECTIVES OF THE PROJECT

Objective 01 - Analysis and representation with abstract models

The first scientific objective of the ARS project will be to fully analyze and formally represent real surgical interventions with abstract models, integrating a priori knowledge from textbooks with the structures identified by big data analysis. By comparing interventions performed by different surgeons, this objective will allow us to identify not only the details of each intervention but also the surgeon's reasoning and the motivations behind each action.

Another key aspect of this objective is environment modeling, since the data will support the identification of anatomical structures and their properties, as well as the creation of realistic phantoms. From this analysis we will develop the intervention specification to be verified during the demonstration phase.

Objective 02 - Intervention planning

The second scientific objective of the project will be to develop methods to plan an intervention for a specific patient anatomy. Task planning will overcome the combinatorial explosion by instantiating the intervention model to the patient-specific anatomy, thus limiting the number of possible choices. However, since changes occurring during the intervention make it impossible to plan every step in advance, a key aspect of this objective will be online, reactive planning.
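The idea of limiting the choices by instantiating the model can be illustrated with a toy example. This is a minimal sketch under our own assumptions, not the project's planner: the intervention model is a hypothetical acyclic set of actions whose applicability depends on patient features (`bmi`, `organ_overlap`, `vessel_distance_mm` and every action name are invented for the example), and instantiation simply discards the actions whose guards fail for the given anatomy before the remaining plans are enumerated.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical patient description: feature name -> value measured on preoperative images.
Anatomy = Dict[str, float]


@dataclass(frozen=True)
class Transition:
    source: str
    action: str
    target: str
    # Guard deciding whether the action is applicable to a given anatomy (always true by default).
    guard: Callable[[Anatomy], bool] = lambda anatomy: True


def instantiate(model: List[Transition], anatomy: Anatomy) -> List[Transition]:
    """Keep only the transitions whose guard holds for this patient."""
    return [t for t in model if t.guard(anatomy)]


def enumerate_plans(model: List[Transition], start: str, goal: str) -> List[List[str]]:
    """Depth-first enumeration of every action sequence from start to goal (model assumed acyclic)."""
    plans: List[List[str]] = []

    def visit(state: str, prefix: List[str]) -> None:
        if state == goal:
            plans.append(prefix)
            return
        for t in model:
            if t.source == state:
                visit(t.target, prefix + [t.action])

    visit(start, [])
    return plans


# Toy intervention model: states, actions and thresholds are invented for illustration.
MODEL = [
    Transition("start", "access_port_A", "access"),
    Transition("start", "access_port_B", "access", lambda a: a["bmi"] < 30),
    Transition("access", "retract_organ", "exposed", lambda a: a["organ_overlap"] > 0.5),
    Transition("access", "direct_view", "exposed", lambda a: a["organ_overlap"] <= 0.5),
    Transition("exposed", "dissect_blunt", "dissected"),
    Transition("exposed", "dissect_sharp", "dissected", lambda a: a["vessel_distance_mm"] > 5.0),
    Transition("dissected", "suture", "end"),
]

patient = {"bmi": 34.0, "organ_overlap": 0.7, "vessel_distance_mm": 3.0}
print(len(enumerate_plans(MODEL, "start", "end")))  # -> 8 (all guards ignored)
print(enumerate_plans(instantiate(MODEL, patient), "start", "end"))
```

With every guard ignored the toy model admits 8 action sequences; for the example patient only one survives. Online, reactive planning would repeat the instantiation whenever intraoperative observations update the anatomy features.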

Objective 03 - Real time control

The third scientific objective of the project is to develop methods for the real-time control of the surgical instruments during the execution of the intervention. Hybrid controllers will be designed to account for both the discrete evolution of the intervention and the continuous motion of the tools. Furthermore, since the instruments must be localized with respect to the patient anatomy, an important aspect of this objective is the identification of the organ positions in the surgical area and of their biomechanical properties.
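A hybrid controller couples discrete modes with continuous dynamics. As a minimal sketch, assuming a single tool tip driven by a proportional velocity law and three hypothetical modes (`approach`, `grasp`, `retract`) with invented set-points in metres, each mode defines a continuous flow toward its target, and a guard on the position error triggers the discrete jump to the next mode. The gains, tolerances and mode names are illustrative assumptions, not values from the project.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Vec = Tuple[float, float, float]


def error_to(target: Vec, position: Vec) -> Vec:
    return tuple(t - p for t, p in zip(target, position))


def norm(v: Vec) -> float:
    return sum(x * x for x in v) ** 0.5


@dataclass(frozen=True)
class Mode:
    name: str
    target: Vec               # continuous set-point of the tool tip in this mode (metres)
    next_mode: Optional[str]  # discrete successor once the guard fires (None = final mode)
    tolerance: float = 1e-3   # guard: switch when the tool is within this distance of the target


def flow(position: Vec, mode: Mode, gain: float, dt: float) -> Vec:
    """Continuous dynamics: one explicit-Euler step of the proportional law v = gain * error."""
    return tuple(p + gain * e * dt for p, e in zip(position, error_to(mode.target, position)))


def run(modes: Dict[str, Mode], start: str, position: Vec,
        gain: float = 2.0, dt: float = 0.01, max_steps: int = 10_000) -> Tuple[List[str], Vec]:
    """Simulate the hybrid automaton and return the visited modes and the final position."""
    mode = modes[start]
    trace = [mode.name]
    for _ in range(max_steps):
        if norm(error_to(mode.target, position)) < mode.tolerance:
            if mode.next_mode is None:    # final mode reached: stop
                return trace, position
            mode = modes[mode.next_mode]  # discrete jump
            trace.append(mode.name)
        position = flow(position, mode, gain, dt)
    raise RuntimeError("guard never satisfied within max_steps")


# Hypothetical modes of a grasp-and-retract subtask.
MODES = {
    "approach": Mode("approach", (0.0, 0.0, 0.05), "grasp"),
    "grasp": Mode("grasp", (0.0, 0.0, 0.0), "retract"),
    "retract": Mode("retract", (0.0, 0.0, 0.08), None),
}

trace, final = run(MODES, "approach", (0.1, 0.05, 0.1))
print(trace)  # -> ['approach', 'grasp', 'retract']
```

Keeping the guards separate from the flows mirrors the split described above: the discrete layer follows the evolution of the intervention, while the continuous layer only has to track the current set-point.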

Objective 04 - Situation awareness and reasoning methods

The fourth and most ambitious scientific objective of the ARS project is the development of situation awareness and reasoning methods capable of handling a real surgical intervention. This objective will apply the reasoning methods and the control policies identified in the Caltech and City of Hope Cancer Center (COH) big data to the execution of the intervention, and we will develop methods to learn from a single surgical procedure. When an unknown situation occurs, the clinical data will be searched for a match, to make sure that no details have been missed. If this fails, control will be transferred to the surgeon, as in a normal surgical intervention.
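The fallback policy for unknown situations can be expressed as a nearest-neighbour lookup followed by a handover. The sketch assumes that each recorded situation has been reduced to a numeric feature vector paired with the surgeon's response; the `ClinicalCase` type, the distance threshold and the handover label are hypothetical, and Euclidean distance simply stands in for whatever similarity measure the project eventually adopts.

```python
from dataclasses import dataclass
from math import dist
from typing import Sequence, Tuple

# Hypothetical label meaning "stop autonomous execution and give control to the surgeon".
HANDOVER = "transfer_control_to_surgeon"


@dataclass(frozen=True)
class ClinicalCase:
    features: Tuple[float, ...]  # hypothetical feature vector summarizing a recorded situation
    response: str                # action taken by the surgeon in the recorded intervention


def resolve_unknown(observation: Sequence[float], library: Sequence[ClinicalCase],
                    max_distance: float) -> str:
    """Return the response of the closest recorded case, or hand over to the surgeon."""
    best = min(library, key=lambda case: dist(case.features, observation), default=None)
    if best is not None and dist(best.features, observation) <= max_distance:
        return best.response
    return HANDOVER


LIBRARY = [
    ClinicalCase((0.0, 1.0), "coagulate"),
    ClinicalCase((5.0, 5.0), "irrigate"),
]
print(resolve_unknown((0.1, 0.9), LIBRARY, max_distance=0.5))    # -> coagulate
print(resolve_unknown((10.0, -3.0), LIBRARY, max_distance=0.5))  # -> transfer_control_to_surgeon
```

The threshold encodes how conservative the system is: a small `max_distance` hands control back to the surgeon more often, which is the safer default.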

Objective 05 - Autonomous execution demonstration

The fifth and final objective of this project is to demonstrate the autonomous execution of a representative surgical intervention using the DVRK setup and a patient-specific physical phantom.

This objective will first aim at improving the hardware setup and addressing robot safety and security. Then we will verify that the autonomous execution meets the intervention requirements extracted from the COH data, and we will validate the intervention by comparing the kinematic and video data with the COH files. Finally, we will measure the quality of the autonomous execution by developing dedicated benchmarks.

METHODOLOGY

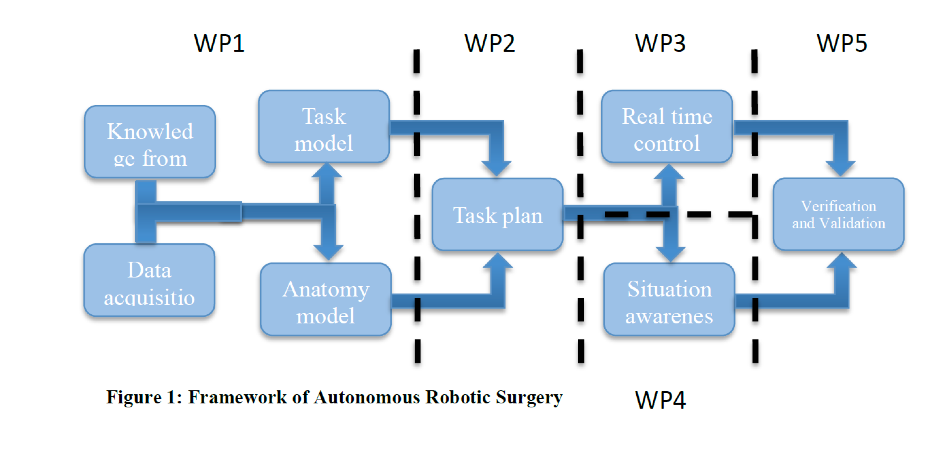

The project is organized into five main research Work Packages (WPs), one for each of the research objectives, plus a sixth WP for management and communication. The structure of the project is shown in the figure above.

- WP1 addressing the development of models and abstractions of the clinical data;

- WP2 developing the plan of a new intervention;

- WP3 concerning the real-time control of the intervention;

- WP4 focusing on real-time situation awareness and reasoning;

- WP5 addressing the integration of the algorithms into the DVRK and the demonstration of the project capabilities;

- WP6 covering management and communication.

WORK PACKAGES

In the following, each WP is described at the level of detail currently possible, since we expect the data analysis in WP1 to reveal many unexpected facts.

WP 1 – Data Processing and Model Building

In this WP we will analyze the COH-Caltech data set to extract as much information as possible regarding the surgical procedures.

Furthermore, we will build patient-specific anatomical models, both virtual and physical, that will allow us to test the intervention in simulation and with the real robot: the physical models, i.e. phantoms, will be the “patients” of our demonstrations.

Tasks

WP1 will be organized in three main tasks and its main results will be:

- The hybrid automata modeling the COH interventions;

- The virtual and physical models of the surgical areas;

- The intervention analysis using formal methods.

WP 2 – Intervention Planning

In this WP we will develop an approach to surgical planning that mirrors, as closely as possible, the planning of a real robotic surgery.

We assume that the models of the intervention, namely the Action Automaton (AA), the Situation Awareness Automaton (SAA), and the Housekeeping Automaton (HA), are available as our background knowledge, and that the preoperative images of the patient are available as well. As described in WP1, we will use the COH stereo images to develop the Virtual (VM) and Physical (PM) models of a specific intervention, which will be used to validate the intervention plan.

We will develop the intervention plan using only the preoperative images of the human patient, as human surgeons do, and from these images we will derive the Pre-Operative Model (POM) of the surgical area. Thus the POM will be used for planning and testing, as in real surgeries, whereas the PM, i.e. the phantom of the surgical area, will be the “patient” of the autonomous surgery. To test the robustness of the algorithms, we will exclude one intervention from this process and may use its PM as an unknown patient for the autonomous intervention.

Tasks

WP2 will be organized in three main tasks:

- the first addressing POM development and intervention simulation;

- the second planning the intervention;

- the third task verifying the plan using Formal Methods and Simulation.

The plan will instantiate the intervention automata for the specific patient, including additional actions with instruments held by surgical assistants.

WP3: Real Time Task Control

In this WP we will follow the steps of a real robot-assisted intervention.

We will use the Physical Model (PM) as our patient, the Pre-Operative Model (POM) as our understanding of the patient anatomy, and the plan to carry out the intervention. The plan consists of the patient-specific instantiation of the three intervention automata: during the procedure we will execute the Action Automaton (AA) and acquire the sensory data for the Situation Awareness Automaton (SAA) and the Housekeeping Automaton (HA).

Tasks

WP3 will be organized in four main tasks:

- the first addressing the registration of the POM with the Physical Model (PM);

- the second addressing the localization of the surgical instruments in the PM;

- the third task addressing the execution of the intervention plan;

- and finally, the fourth addressing housekeeping activities, such as measuring model parameters and learning from the robot's own actions.
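The registration method is not fixed in this description. For illustration only, the sketch below solves the simplest version of the problem: a least-squares rigid registration in 2D with known point correspondences, for example landmarks identified in both the POM and the PM. Real registration would be 3D, would usually have to estimate the correspondences (e.g. with ICP), and would need to account for tissue deformation; the landmark coordinates and the "true" transform below are invented test values.

```python
from math import atan2, cos, isclose, sin
from typing import List, Tuple

Point = Tuple[float, float]


def centroid(points: List[Point]) -> Point:
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def register_2d(source: List[Point], target: List[Point]) -> Tuple[float, Point]:
    """Least-squares rigid transform (rotation angle, translation) mapping source onto target.

    Assumes source[i] corresponds to target[i] (e.g. paired anatomical landmarks).
    """
    (sx, sy), (tx, ty) = centroid(source), centroid(target)
    cross = dot = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        # Work with centred coordinates so that only the rotation remains to be found.
        px, py, qx, qy = px - sx, py - sy, qx - tx, qy - ty
        cross += px * qy - py * qx
        dot += px * qx + py * qy
    theta = atan2(cross, dot)  # rotation angle minimizing the squared residuals
    c, s = cos(theta), sin(theta)
    # Translation maps the rotated source centroid onto the target centroid.
    return theta, (tx - (c * sx - s * sy), ty - (s * sx + c * sy))


def transform(theta: float, t: Point, p: Point) -> Point:
    """Apply rotation theta followed by translation t to point p."""
    c, s = cos(theta), sin(theta)
    return (c * p[0] - s * p[1] + t[0], s * p[0] + c * p[1] + t[1])


# Hypothetical landmarks in POM coordinates and their noise-free positions in the PM frame.
pom_landmarks = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (3.0, 1.0)]
pm_landmarks = [transform(0.3, (2.0, -1.0), p) for p in pom_landmarks]

theta, t = register_2d(pom_landmarks, pm_landmarks)
print(isclose(theta, 0.3), t)
```

With noise-free correspondences the estimated angle and translation recover the transform used to generate the data; with real measurements the same formula returns the least-squares best fit.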

WP4: Real Time Reasoning and Situation Awareness

This WP includes all the tasks related to real-time learning and reasoning about the current and future status of the intervention.

As mentioned above, the surgical robot carries out the intervention plan based on the output of the Situation Awareness Automaton (SAA), which identifies the intervention state and evaluates hypotheses about the current situation. The SAA will have a two-level reasoning structure: a lower level that identifies the state and processes the data triggering state transitions, and a higher level that assesses the overall intervention situation.
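The two-level structure of the SAA can be sketched as follows, assuming that sensor processing already produces discrete events. The state names, event names and transition table are hypothetical. The lower level maps each event to a state transition; the higher level compares the identified state history with the planned sequence and reports whether the intervention is on plan, deviating from it, or in an unknown situation (an event with no matching transition).

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Lower-level model: (current state, processed sensor event) -> next state. Names are hypothetical.
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("idle", "tool_contact"): "grasping",
    ("grasping", "grip_closed"): "holding",
    ("holding", "tissue_tension_high"): "retracting",
    ("retracting", "grip_open"): "idle",
}


@dataclass
class SituationAwareness:
    expected_sequence: List[str]                      # states the plan expects, in order
    state: str = "idle"
    history: List[str] = field(default_factory=list)

    def observe(self, event: str) -> str:
        """Lower level: identify the new state from an event, then ask the higher level."""
        next_state = TRANSITIONS.get((self.state, event))
        if next_state is None:
            # No known transition explains the event: keep the state, flag it upward.
            return self.assess(unexplained_event=event)
        self.state = next_state
        self.history.append(next_state)
        return self.assess()

    def assess(self, unexplained_event: Optional[str] = None) -> str:
        """Higher level: judge the overall situation against the planned sequence."""
        if unexplained_event is not None:
            return "unknown_situation"
        if self.history == self.expected_sequence[: len(self.history)]:
            return "on_plan"
        return "deviation"


saa = SituationAwareness(expected_sequence=["grasping", "holding", "retracting", "idle"])
for event in ["tool_contact", "grip_closed", "bleeding_detected"]:
    print(event, "->", saa.observe(event))
```

An `unknown_situation` result is the natural entry point for the strategies listed below: a planned emergency reaction, further examination of the data, or handing control to the surgeon.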

In this WP we will also attempt to deal with unexpected situations, either by activating a planned emergency reaction, by further examining the data, or by asking the human surgeon to continue the intervention. This WP also covers learning from the current execution, to account for any differences identified with respect to the models.

Tasks

WP4 will be organized in four main tasks:

- the first for sensor data acquisition and processing;

- the second for state identification;

- the third task for situation assessment;

- and the last task to address unknown situations.

WP5: Verification and Validation of the Results

This fifth work package of the ARS project will carry out the demonstration of autonomous robotic surgery and will address all the issues related to the experimental setup and the intervention demonstrations.

We will implement a sequence of verification, validation and benchmarking tests to assess the quality of the solutions developed. We will also address issues related to hardware setup, safety, and system security, by developing a fault detection system for the surgical robot and security protection for its network connection.

Tasks

WP5 is divided into five tasks:

- hardware setup and improvements;

- system integration and testing;

- fault detection and isolation;

- cyber security;

- and then testing and demonstrations of the complete system.
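A common starting point for fault detection and isolation is residual monitoring: compare what the controller commanded with what the sensors measured, and flag a component when the residual stays abnormal. The sketch below assumes per-joint commanded and measured positions sampled at the same instants, with a hypothetical threshold and persistence count; the fault detection system developed in the project may rely on model-based observers or learned models instead.

```python
from typing import List, Sequence


def detect_and_isolate(commanded: Sequence[Sequence[float]], measured: Sequence[Sequence[float]],
                       threshold: float, persistence: int) -> List[int]:
    """Return the indices of joints whose |commanded - measured| exceeded the threshold
    for at least `persistence` consecutive samples (detection + isolation per joint)."""
    n_joints = len(commanded[0])
    counters = [0] * n_joints  # consecutive out-of-bound samples per joint
    faulty = set()
    for cmd, meas in zip(commanded, measured):
        for j in range(n_joints):
            if abs(cmd[j] - meas[j]) > threshold:
                counters[j] += 1
                if counters[j] >= persistence:
                    faulty.add(j)
            else:
                counters[j] = 0  # isolated spikes are treated as noise, not faults
    return sorted(faulty)


# Hypothetical joint positions (rad): joint 1 drifts for 3 samples, joint 2 has a single spike.
commanded = [[0.0, 0.0, 0.0] for _ in range(6)]
measured = [
    [0.001, 0.0, 0.0],
    [0.0, 0.2, 0.0],
    [0.0, 0.2, 0.05],
    [0.0, 0.2, 0.0],
    [0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0],
]
print(detect_and_isolate(commanded, measured, threshold=0.01, persistence=3))  # -> [1]
```

The persistence count trades detection delay against false alarms: a single out-of-bound sample on joint 2 is ignored, while the sustained deviation on joint 1 is both detected and isolated.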

WP6: Management and dissemination

This work package is responsible for managing the project results, storing and analyzing the data produced, meeting the ethical requirements, and carrying out the dissemination actions.

Great attention will be given to project dissemination, especially because autonomous robots attract strong public interest and their application to healthcare may raise concerns among the public. Therefore, every effort will be made to convey the message that the technologies developed by the ARS project will improve healthcare. We will use standard media such as the Internet, printed news, and leaflets, and we will prepare short video clips and professional videos to illustrate the project ideas to different audiences. Special dissemination actions will be directed at the medical community, to gather feedback and to promote its participation in the project activities.

The ARS project will focus on robotic surgery and will demonstrate the feasibility of autonomous surgery in a complete surgical procedure.